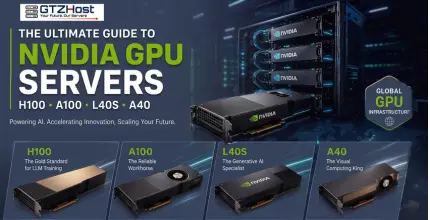

At GTZHOST, we provide high-performance NVIDIA GPU solutions across six continents, ensuring low latency and maximum throughput. But which GPU is right for your specific workload? Let's break down the giants of the industry.

1. NVIDIA H100: The Gold Standard for LLMs

The NVIDIA H100 Tensor Core GPU is built for massive-scale AI. Based on the Hopper architecture, it includes a Transformer Engine with FP8 precision support, which makes it significantly faster than its predecessor, the A100, for training Transformer models.

Best For: Training Large Language Models (like GPT-style bots), Deep Learning, and massive AI clusters.

GTZHOST Advantage: We offer configurations up to 8x NVIDIA H100 with NVLink and 1.5TB RAM, ideal for high-end enterprise needs in locations like Dallas and Incheon.

2. NVIDIA A100: The Reliable Workhorse

Before the H100, there was the A100, and it remains a powerhouse for versatile data center workloads. Its high memory bandwidth (roughly 2 TB/s on the 80GB model) makes it a favorite for scientific simulations and data engineering.

Best For: Data analytics, AI inference, and high-performance computing (HPC).

Key Specs: Available with 80GB VRAM, providing the memory headroom needed for large models and complex datasets.
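To make the 80GB figure concrete, here is a rough sizing sketch. It assumes the common weights-only rule of thumb (parameter count times bytes per parameter) and ignores activations, KV cache, and framework overhead, so real requirements will be higher. The function names and the example model sizes are illustrative assumptions, not GTZHOST tooling.

```python
A100_VRAM_GB = 80  # from the Key Specs line above


def weights_gb(num_params: float, bytes_per_param: int = 2) -> float:
    """Approximate memory for model weights alone, in GB (1 GB = 1e9 bytes).

    bytes_per_param=2 corresponds to 16-bit weights (FP16/BF16).
    """
    return num_params * bytes_per_param / 1e9


def fits_on_one_gpu(num_params: float,
                    bytes_per_param: int = 2,
                    vram_gb: float = A100_VRAM_GB) -> bool:
    """True if the weights alone fit in a single GPU's VRAM."""
    return weights_gb(num_params, bytes_per_param) <= vram_gb


if __name__ == "__main__":
    # Hypothetical model sizes, chosen only for illustration.
    for label, params in [("7B", 7e9), ("70B", 70e9)]:
        print(label, weights_gb(params), "GB, fits:", fits_on_one_gpu(params))
```

Under these assumptions, a 7-billion-parameter model in 16-bit precision needs about 14 GB for weights and fits comfortably, while a 70-billion-parameter model needs about 140 GB and has to be split across multiple GPUs.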

3. NVIDIA L40S: The Generative AI Specialist

If your focus is on AI inference (running trained models) rather than training them, or on high-end professional graphics, the L40S is a popular choice. It bridges the gap between pure compute and high-end rendering.

Best For: Generative AI, 3D Graphics Rendering (Omniverse), and Video Engineering.

Why Choose the L40S? It offers a more cost-effective entry point than the H100 while delivering strong performance in multi-modal AI tasks.

4. NVIDIA A40: The Visual Computing King

The A40 is the evolution of the professional workstation GPU. It is optimized for visual computing and virtual desktop infrastructure (VDI).

Best For: Cloud gaming, AR/VR development, and large-scale architectural rendering.

Global Reach: We have high-availability A40 clusters in Sydney, Melbourne, and Perth, perfect for the Oceania market.

Comparison: Which One Fits Your Budget?

| GPU Model | Best Use Case | Key Strength | Starting Price (Approx) |
|---|---|---|---|
| H100 | LLM Training | Transformer Engine | $1,945 - $22,000+ |
| A100 | Data Science | 80GB VRAM | $1,341 - $1,850+ |
| L40S | GenAI / Graphics | Versatility | $1,438+ |
| A40 | Rendering / VDI | Visual Computing | $1,000+ |
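The comparison above can also be expressed as a small lookup, which may help when shortlisting by budget. This is only a sketch: the prices are the low end of each range in the table, the billing period is not stated in the table and is not assumed here, and the function name `gpus_within_budget` is hypothetical.

```python
from typing import List

# Figures copied from the comparison table (low end of each price range, in USD).
GPUS = {
    "H100": {"use_case": "LLM Training", "starting_price": 1945},
    "A100": {"use_case": "Data Science", "starting_price": 1341},
    "L40S": {"use_case": "GenAI / Graphics", "starting_price": 1438},
    "A40": {"use_case": "Rendering / VDI", "starting_price": 1000},
}


def gpus_within_budget(budget: float) -> List[str]:
    """Return models whose starting price is at or below the budget, cheapest first."""
    matches = [
        (spec["starting_price"], model)
        for model, spec in GPUS.items()
        if spec["starting_price"] <= budget
    ]
    return [model for _, model in sorted(matches)]


if __name__ == "__main__":
    for model in gpus_within_budget(1500):
        print(model, "-", GPUS[model]["use_case"])
```

Starting prices only bound the cheapest configuration of each model, so a shortlist like this is a first filter rather than a quote.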

Why Deploy Your GPU Infrastructure with GTZHOST?

Choosing the right hardware is only half the battle. The environment where that hardware lives determines your actual uptime and speed.

Global Footprint: With data centers in the USA (Dallas, Miami), Europe (Frankfurt, Stockholm, Luxembourg), and Asia-Pacific (Incheon, Sydney), we place your compute power closer to your users.

Bare Metal Performance: Unlike virtualized cloud instances, our GPU servers are Bare Metal. You get 100% of the hardware resources with no "noisy neighbors."

High-Speed Networking: Our H100 and L40S clusters feature networking speeds up to 200Gbps, eliminating bottlenecks during data-heavy training sessions.
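For a sense of what 200Gbps means in practice, the arithmetic can be sketched as below. It assumes an ideal link running at full line rate and ignores protocol overhead and storage speed, so real transfers will take longer. The function name and the 1 TB dataset size are illustrative assumptions.

```python
def transfer_seconds(size_bytes: float, link_gbps: float) -> float:
    """Ideal time to move size_bytes over a link of link_gbps gigabits per second."""
    bits = size_bytes * 8
    return bits / (link_gbps * 1e9)


if __name__ == "__main__":
    one_terabyte = 1e12  # hypothetical dataset size, in bytes
    for gbps in (10, 200):
        print(f"{gbps} Gbps: {transfer_seconds(one_terabyte, gbps):.0f} s")
```

Under these idealized assumptions, moving 1 TB takes about 40 seconds at 200Gbps, compared with about 800 seconds on a 10Gbps link.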

Final Thoughts

Whether you are a startup training your first AI model or an enterprise scaling a global rendering farm, GTZHOST has the NVIDIA infrastructure to power your vision. Ready to scale? Explore our GPU Server Configurations and deploy your instance in minutes.

View GTZ Host GPU Server Configurations

Frequently Asked Questions (FAQ)

Which NVIDIA GPU is best for training LLMs?

The NVIDIA H100 is considered the gold standard for Large Language Models (LLMs). Built on the Hopper architecture, it offers incredible speeds for training Transformer models, especially in high-end configurations like our 8x H100 NVLink servers.

What makes the L40S different from the A100?

The A100 is a reliable workhorse for deep learning and data analytics, helped by its 80GB of VRAM. The L40S specializes in Generative AI inference and 3D graphics rendering, making it a versatile and cost-effective option.

Why should I choose Bare Metal over Cloud for GPU instances?

Unlike virtualized cloud instances where resources can be shared, our GPU servers are 100% Bare Metal. This means you get full access to the hardware resources with zero "noisy neighbor" interference, ensuring maximum performance for your AI workloads.

Where are GTZHOST GPU servers located?

We provide high-performance NVIDIA GPU solutions across six continents. Key locations include Dallas and Miami in the USA; Frankfurt, Stockholm, and Luxembourg in Europe; and Incheon and Sydney in the Asia-Pacific region, ensuring global low latency.